dgen-xr

* Some details will be omitted for proprietary reasons.

Project Overview

DGEN-XR is one of the intern projects I developed during my time at NASA's Graphics & Visualization (GVIS) Lab.

The goal was to explore how extended reality (XR) could support aeronautics research, particularly in collaboration with an acoustic engineer studying jet engine noise.

After demonstrating the initial MVP, the project evolved into a scalable R&D tool that enables users to analyze acoustic effects of user-defined parameters on the DGEN engine. I collaborated with engineers, researchers, technical leads, and project managers.

Role: XR Developer

Time Frame: 2 months

Technology: Unity, C#, Meta Quest 3

My Impact

- Introduced a 3D visualization approach that supplements traditional methods of analysis where data is studied in 2D graphs and charts. For the first time, users can simulate being in the same test environment and experience the acoustics in a spatialized setting.

- The application will be incorporated into their early career training for interns and newcomers to familiarize themselves with the engine.

- DGEN-XR has since been released for internal use within NASA. Acoustic engineers and researchers can run through past tests and interact with the engine virtually. This virtual experience significantly reduces the costs and time of shipping individual engine parts to collaborators.

- I was invited to present the project at an agency-wide technical talk, which helped my team gain visibility at other NASA centers. I explained the feasibility of adopting XR for research, and exposed my team to new clients.

Project Context

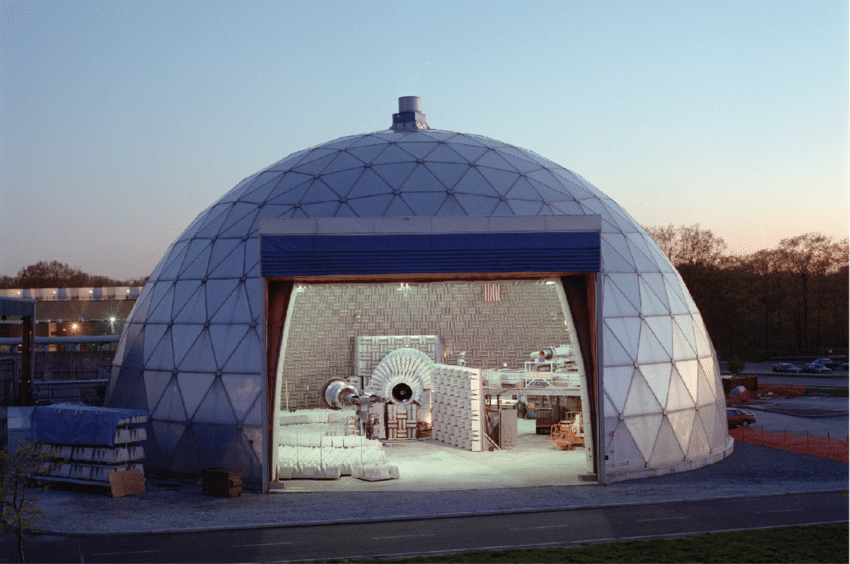

I worked with the team that conducts aero-propulsion noise-reduction research in the Aero-Acoustic Propulsion Laboratory (AAPL).

The engine we focused on was the DGEN380, a small turbofan that is representative of larger and more modern turbofan engines, which makes it suitable for multidisciplinary research.

This is precisely what DGEN-XR aims to support, as a versatile AR/VR tool that accommodates users with various roles and goals. The app targets Meta Quest 3 headsets, but it was designed to be compatible with most Android-based builds.

The Application

Engine setup in AAPL

Engine setup in DGEN-XR

Spatialized Audio

There are 30 audio sources surrounding the engine, each holding the digitized audio synthesized from microphone time histories acquired during testing.

These audio sources were positioned programmatically and generated at runtime, based on their documented spherical coordinates translated into Unity's coordinate system.

This approach reduces manual placement of GameObjects and keeps the layout flexible if any part of the scene changes.
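The coordinate conversion described above can be sketched as follows. This is a minimal illustration, not the project's actual code: the angle convention (azimuth measured from forward toward the right, elevation measured up from the horizontal plane), the `MicLayout` class, and the `ToUnityPosition` name are all assumptions, since the documented microphone convention is not given here. It is written in plain C# with no Unity dependency so it can run anywhere.

```csharp
using System;

public static class MicLayout
{
    // Hypothetical convention: azimuth is measured in the horizontal plane
    // from +Z (forward) toward +X (right); elevation is measured up from
    // that plane toward +Y. Unity's world space is left-handed with Y up.
    public static (double X, double Y, double Z) ToUnityPosition(
        double radius, double azimuthDeg, double elevationDeg)
    {
        double az = azimuthDeg * Math.PI / 180.0;
        double el = elevationDeg * Math.PI / 180.0;

        // Project the radius onto the horizontal plane first.
        double horizontal = radius * Math.Cos(el);

        return (horizontal * Math.Sin(az),   // X: right
                radius * Math.Sin(el),       // Y: up
                horizontal * Math.Cos(az));  // Z: forward
    }
}
```

In a Unity scene, each result could be cast to `float` and assigned to the `transform.localPosition` of a GameObject that is created at runtime and given an `AudioSource` component.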

The sound attenuation curve shows how volume falls off with distance. This logarithmic rolloff is meant to mimic how sound fades with distance in the real world.
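To make the shape of such a curve concrete, here is a small sketch that assumes a plain inverse-distance law, a common model for this kind of rolloff. The curve actually configured in DGEN-XR is not documented here, so the `Rolloff` class, its method names, and the `minDistance` parameter are illustrative assumptions.

```csharp
using System;

public static class Rolloff
{
    // Linear gain in [0, 1] under an assumed inverse-distance law:
    // full volume inside minDistance, then gain = minDistance / distance.
    public static double Gain(double distance, double minDistance)
    {
        if (minDistance <= 0.0)
            throw new ArgumentOutOfRangeException(nameof(minDistance));
        return distance <= minDistance ? 1.0 : minDistance / distance;
    }

    // The same gain expressed in decibels relative to full volume.
    public static double GainDb(double distance, double minDistance)
        => 20.0 * Math.Log10(Gain(distance, minDistance));
}
```

Under this model, each doubling of the distance halves the gain, which is a drop of roughly 6 dB. That is why the curve falls steeply near the source and flattens out farther away.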

Each audio source can be examined in isolation, and the user interface displays relevant metadata regarding its position.

Engine Configuration

When an engine component is brought close enough to the turbofan, the engine's internal parts become visible (using a Fresnel effect) so that the user can identify where to position the component.

The user does not need to know exactly where each component belongs, because a component snaps into place once it is within the threshold.
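The snapping behavior can be illustrated with a simple distance check. This is only a sketch under assumptions: the `SnapHelper` class, the idea of a single target "slot" position per component, and the use of straight-line distance as the threshold test are hypothetical, and the real application may use a different test.

```csharp
using System;

public static class SnapHelper
{
    // Straight-line distance between two points.
    public static double Distance(
        (double X, double Y, double Z) a, (double X, double Y, double Z) b)
    {
        double dx = a.X - b.X, dy = a.Y - b.Y, dz = a.Z - b.Z;
        return Math.Sqrt(dx * dx + dy * dy + dz * dz);
    }

    // Returns the slot position when the held component is within the
    // threshold; otherwise leaves the component where the user put it.
    public static (double X, double Y, double Z) Resolve(
        (double X, double Y, double Z) held,
        (double X, double Y, double Z) slot,
        double threshold)
        => Distance(held, slot) <= threshold ? slot : held;
}
```

The same distance could also drive the visibility of the engine's internal parts, so that the visual hint and the snap respond to one shared measurement.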

Engine components are treated as parameters of the simulation because they affect engine acoustics. Other factors, such as the throttle position (also adjusted by the user), affect the audio as well.

Next Steps

The big picture goal is to make DGEN-XR a collaborative XR tool that allows users to observe past tests, experiment with new ideas, and experience the acoustic signature of the DGEN turbofan.

Some ideas for improvement are:

- Incorporating multiplayer networking to allow multiple users to collaborate in real-time.

- Optimizing 3D meshes.

- Gathering more user feedback to make the UI more intuitive for first-time VR users.

Thoughts

My favorite part of this project was how much problem solving it required. There were many requirements to fulfill: the project is inherently data-driven, so accuracy is crucial, but visual appeal and performance in an XR application are equally important.

This project was a perfect combination of fun and challenge. I had the opportunity to touch on many areas of VR development, such as the graphics pipeline, user interface, 3D features, and visual aesthetics within the bounds of functionality.

My managers and I did not expect the project to receive so much appreciation from the engineering and research teams, and I think the unique opportunity to present my ideas showed how impactful the project was. This is special to me because I am very results-driven, and I know that my work will remain useful even though the internship is over.

Also, here are some photos of my time at NASA when I volunteered at STEM outreach events!